What is containerization and how to tailor it for your use case

Written by Saliha Sajid

Containerization is the process of running applications in isolated self-contained environments called containers. This approach encapsulates the application logic and its dependencies, allowing it to run independently of the underlying infrastructure.

Containerization is not the right fit for every use case, but there are several reasons to containerize applications that handle data extraction and processing. Some of them are listed below:

Portability: The same container image can run under different container orchestrators and in different environments

Decoupled architecture: Separation of concerns between application and infrastructure layer

Simpler management: Ability to upgrade or roll back in case of failure

Fast delivery: Fast modification, deployment and shipping of applications

Which applications to containerize?

The question stands: which applications to containerize? Well, microservices are one of the most obvious candidates for containerization. Microservices are loosely coupled, autonomous applications which can be developed, tested, deployed and scaled independently of each other. In data engineering, microservices allow one to split monolithic data pipelines into smaller services and provide reusable components.

Containerization is also particularly useful when you would like to run multiple instances of the same application with different configurations. When business logic is separated from the configuration, a solution like Kubernetes ConfigMaps can help manage the different configurations and roll them out without rebuilding the application image.
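As a minimal sketch of what separating business logic from configuration can look like, the Python snippet below reads its settings from environment variables, which is one way a Kubernetes ConfigMap can be exposed to a container. The `PipelineConfig` class and the variable names `SOURCE_URL`, `BATCH_SIZE` and `OUTPUT_FORMAT` are hypothetical and only serve as an illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class PipelineConfig:
    """Settings that differ between instances of the same application."""
    source_url: str
    batch_size: int
    output_format: str


def load_config(env=None) -> PipelineConfig:
    """Build the configuration from environment variables.

    The variable names are illustrative assumptions; in Kubernetes they
    could be populated from a ConfigMap.
    """
    env = os.environ if env is None else env
    return PipelineConfig(
        source_url=env["SOURCE_URL"],                    # required setting
        batch_size=int(env.get("BATCH_SIZE", "100")),    # optional, defaults to 100
        output_format=env.get("OUTPUT_FORMAT", "json"),  # optional, defaults to "json"
    )


# The same code, configured as two different instances.
instance_a = load_config({"SOURCE_URL": "https://example.com/a", "BATCH_SIZE": "50"})
instance_b = load_config({"SOURCE_URL": "https://example.com/b", "OUTPUT_FORMAT": "csv"})
```

Because the application only ever looks at its environment, the same image can be started many times, each with its own set of values.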

Where to deploy the containerized application?

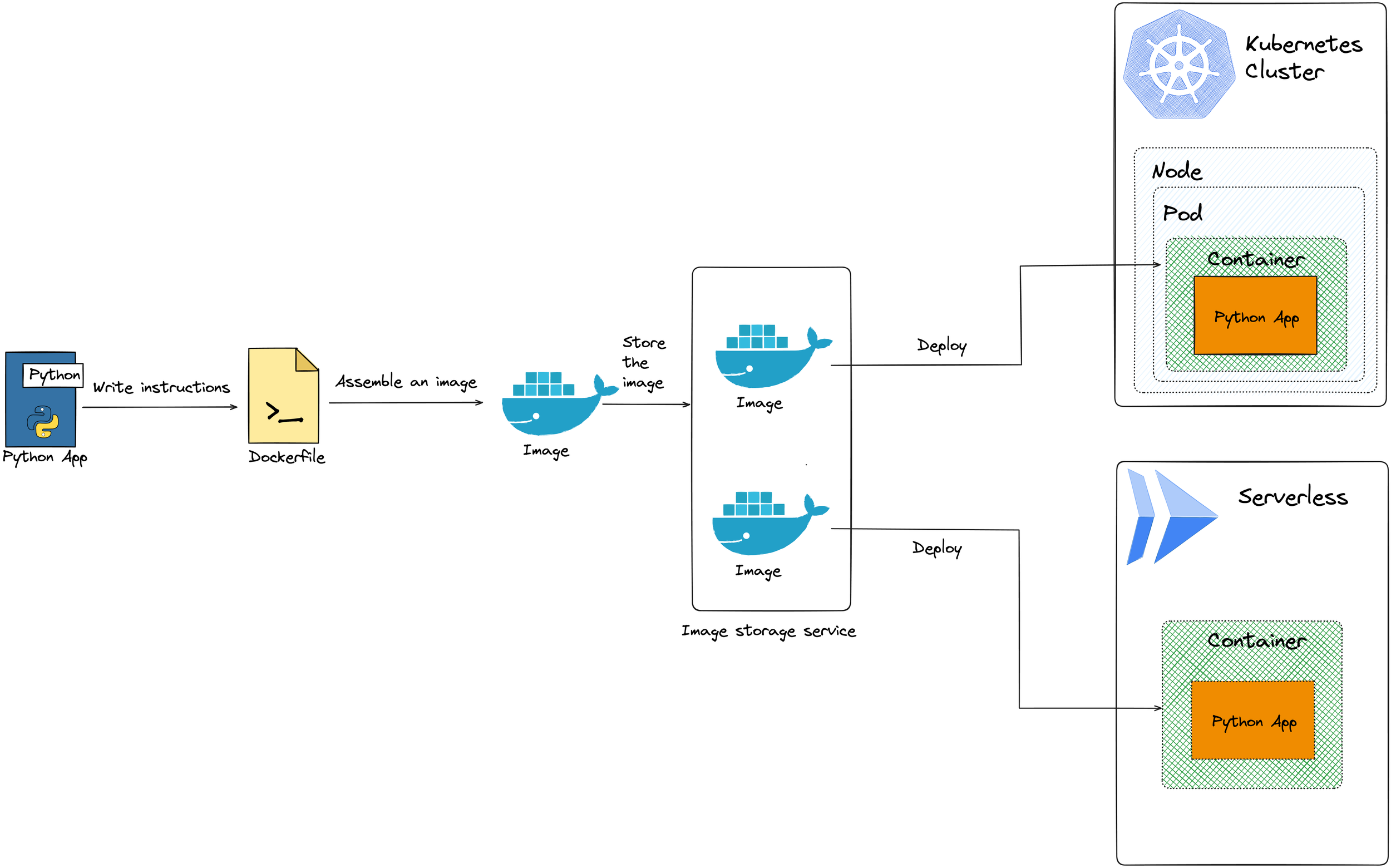

To get started with containerization, Docker is a great platform for building and running an application as a self-sufficient container. Once we have containerized an application, the next step is to deploy it. In recent years, a good share of applications has been deployed on public clouds because of the array of services the major vendors provide. For example, Cloud Run on GCP and Azure Container Apps on Azure are serverless offerings which let you run containerized applications. These services are fully managed and make it easy to get a containerized application up and running in a short amount of time.
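To make this more concrete, here is a minimal sketch of a web application that could be packaged into a container for a service like Cloud Run. It assumes the platform tells the container which port to listen on through a `PORT` environment variable, as Cloud Run does, and falls back to 8080 otherwise. The `HealthHandler` class and the helper functions are hypothetical names, and only the Python standard library is used.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Answers every GET request with a short plain-text body."""

    def do_GET(self):
        body = b"ok\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def resolve_port(env=None, default=8080) -> int:
    """Return the port from the PORT variable, or the default if it is unset."""
    env = os.environ if env is None else env
    return int(env.get("PORT", default))


def make_server(env=None) -> HTTPServer:
    """Create a server bound to all interfaces so the platform can reach it."""
    return HTTPServer(("0.0.0.0", resolve_port(env)), HealthHandler)
```

Calling `make_server().serve_forever()` as the container's start command would then serve requests on whichever port the platform assigns.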

Another option is to deploy on Kubernetes through a managed service such as Google Kubernetes Engine (GKE), Azure Kubernetes Service (AKS) or Amazon Elastic Kubernetes Service (EKS). Kubernetes is ideal if you need fine-grained control over the configuration of applications, including storage, security, and monitoring.

Resource management

Resource management in containerized applications is essential for optimizing performance and ensuring efficient resource utilization. With the dynamic nature of containerized environments, it is crucial to implement strategies that enable effective allocation and monitoring of resources. One approach is to leverage container orchestration platforms like Kubernetes, which offer built-in capabilities for resource management, including CPU and memory limits, as well as auto-scaling based on resource usage metrics. By defining resource constraints at the container level, organizations can prevent resource contention and ensure fair allocation across workloads. Additionally, monitoring tools such as Prometheus and Grafana enable real-time visibility into resource usage metrics, allowing operators to identify bottlenecks and proactively adjust resource allocations as needed. Implementing resource management best practices not only improves application performance, but also helps optimize infrastructure costs by ensuring that resources are allocated efficiently based on workload demands.
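To illustrate auto-scaling based on resource usage, the sketch below follows the scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The function name, the replica bounds and the example numbers are assumptions made for this illustration, and the real autoscaler applies further rules (such as stabilization windows) that are left out here.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Estimate how many replicas are needed to reach the target utilization.

    Simplified version of: ceil(current_replicas * current / target).
    """
    ratio = current_utilization / target_utilization
    # Skip scaling when usage is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # Keep the result within the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))


# Four replicas at 90% CPU with a 60% target: scale out.
scale_out = desired_replicas(4, 90, 60)
# Four replicas at 30% CPU with a 60% target: scale in.
scale_in = desired_replicas(4, 30, 60)
```

A rule like this only works well when each container declares realistic CPU and memory requests, since utilization is measured relative to them.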

Overhead in using Kubernetes vs a managed service

Kubernetes is an open-source container orchestration system and a popular solution for managing and scaling containerized applications. While Kubernetes provides high configurability, this can also be a challenge. Although GKE (Google), AKS (Microsoft) and EKS (Amazon) are managed Kubernetes services, their operational overhead can still be greater than that of running the application on one of the serverless platforms. Cloud Run is far simpler to use, and the threshold for getting an application up and running on it is lower than with Google Kubernetes Engine. Evaluating the specific requirements and constraints of your application will help you determine the most suitable platform for your needs. While setting up Kubernetes for just one workload might be overkill, using Cloud Run for a large application with numerous microservices that need fine-tuned resources may not be reasonable either.

To sum it up, Kubernetes might be useful when:

You need microservices which require individual scaling

You need the flexibility to run applications on multiple cloud providers

You want to automate the provisioning of your Kubernetes resources using tools such as Helm

While Kubernetes is a powerful tool, it might not be right for every use case. Here are some of the use cases where you could do without Kubernetes:

Simple and small-scale applications

Applications which can be decoupled and deployed independently in separate containers

In conclusion

Containerization offers a transformative approach to application deployment, enhancing portability, scalability, and management efficiency. By encapsulating applications and their dependencies into isolated containers, organizations can achieve greater flexibility and agility in their software development and deployment processes.

The decision of which applications to containerize depends on various factors, but ultimately, the choice between platforms hinges on the specific needs and objectives of each application. Whether aiming for fine-grained control and flexibility or seeking simplicity and ease of use, organizations must carefully evaluate their requirements to determine the most suitable approach for containerizing and deploying their applications effectively. By leveraging the strengths of containerization while aligning with their operational capabilities, businesses can unlock the full potential of modern application development and deployment practices.